Thumb Stop Ratio Doesn't Seem Worth Focusing On (But We're Still Testing It)

- jchopak

- Aug 23, 2022

Back in the spring we started using Motion. One of the key metrics it emphasizes is thumb stop ratio (TSR): 3-second video views divided by impressions. If TSR goes up while the rest of the funnel, including the click-to-purchase conversion rate, holds steady, CAC should go down. The math and theory make sense. But on closer inspection, TSR and ROAS don't seem to be closely linked. (Plus, I'm hesitant to put too much emphasis on a metric that can't be used on all of our ads; in this case, we can't use TSR on our static ads.)
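To make the funnel math concrete, here's a minimal Python sketch. It assumes every purchase comes from someone who watched at least 3 seconds and then clicked, so purchases = impressions × TSR × view-to-click rate × click-to-purchase rate. The function names, the view-to-click rate, and all of the numbers are hypothetical, not taken from any client account.

```python
def thumb_stop_ratio(three_second_views: int, impressions: int) -> float:
    """TSR: 3-second video views divided by impressions."""
    return three_second_views / impressions


def cac(spend: float, impressions: int, tsr: float,
        view_to_click: float, click_to_purchase: float) -> float:
    """Customer acquisition cost under a simplified, assumed funnel.

    purchases = impressions * TSR * view-to-click rate * click-to-purchase rate
    """
    purchases = impressions * tsr * view_to_click * click_to_purchase
    return spend / purchases


# Hypothetical account: $1,000 buys 100,000 impressions in both scenarios.
base_tsr = thumb_stop_ratio(20_000, 100_000)    # 20% TSR
better_tsr = thumb_stop_ratio(30_000, 100_000)  # 30% TSR

# Downstream rates are held fixed: this is the key assumption behind the theory.
baseline = cac(1_000, 100_000, base_tsr, view_to_click=0.05, click_to_purchase=0.02)
improved = cac(1_000, 100_000, better_tsr, view_to_click=0.05, click_to_purchase=0.02)

print(f"CAC at {base_tsr:.0%} TSR: ${baseline:.2f}")
print(f"CAC at {better_tsr:.0%} TSR: ${improved:.2f}")
```

In this toy model, lifting TSR from 20% to 30% cuts CAC from $50.00 to about $33.33. Whether the downstream rates really stay put in a live account is exactly the open question.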

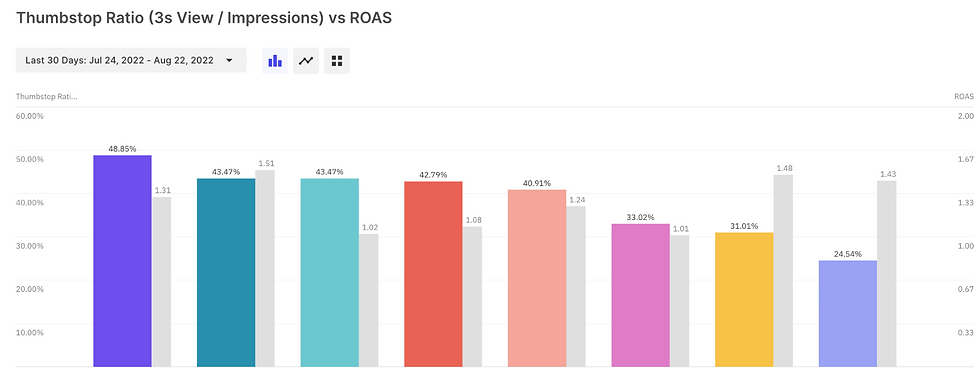

Let's look at 3 client examples. In each chart, the colored bars represent TSR and the corresponding grey bars represent that ad's ROAS. Each chart is sorted by TSR in descending order. (Note: ads with a 0% TSR are static images.)

CLIENT A

CLIENT B

CLIENT C

If TSR and ROAS were perfectly correlated, the grey ROAS bars would shrink from left to right the same way the TSR bars do. Instead, they bounce up and down. Going off the eyeball test alone, it's pretty clear the relationship is weak.

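We took it a step further and ran a simple regression analysis of TSR against ROAS to help us visualize the relationship. The R² was 0.254, meaning TSR explains only about a quarter of the variation in ROAS across the ads in our data. Based on the data we have, that's a weak relationship.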
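For anyone who wants to run the same kind of check on their own account, here's a minimal sketch of computing R² for a single-predictor least-squares fit of ROAS on TSR. The per-ad data is made up for illustration. The sketch also drops static ads, since their 0% TSR means "not applicable" rather than "nobody stopped"; that filtering step is an assumption of the sketch, and keeping those ads in as zeros would change the result.

```python
def r_squared(x: list[float], y: list[float]) -> float:
    """R^2 of an ordinary least-squares line fit of y on x (single predictor)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # Share of ROAS variation left unexplained by the fitted line.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# Hypothetical per-ad data: (TSR, ROAS). A TSR of 0.0 marks a static image ad.
ads = [(0.42, 1.8), (0.35, 3.1), (0.31, 1.2), (0.28, 2.6), (0.22, 2.9), (0.0, 2.2)]

# Static ads have no meaningful TSR, so leave them out of the fit.
video_ads = [(tsr, roas) for tsr, roas in ads if tsr > 0]
tsrs = [tsr for tsr, _ in video_ads]
roases = [roas for _, roas in video_ads]

print(f"R^2 = {r_squared(tsrs, roases):.3f}")
```

With one predictor, R² is the square of the correlation coefficient, so an R² of 0.254 corresponds to a correlation of about 0.5 in magnitude.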

With one client, we are actively testing the TSR hypothesis. We took an ad that we felt was not optimized for TSR (its TSR was well below the account average) but that had historically performed well on ROAS (above the account average). We kept the core elements of the video the same and tried two types of variants: 3 that use the same core footage with a different intro (roughly the first 3 seconds), and 3 that use the exact same footage with a different, more optimized thumbnail.

Again, the thinking is simple: if we can find a variant with a higher TSR, it will likely have the same conversion rate as the control (since it's so similar) and therefore a lower CAC and higher ROAS. If that works, it could be a fairly easy way to improve ROAS on our existing ads and assets and reduce the need to create as many new ads.

But in practice, that hasn't played out in this test. Part of the issue is that the algorithm has funneled spend into the control, so the test variants aren't getting enough data for us to really know what's going on. And while the control does have a strong TSR, it isn't the highest TSR in any breakdown. Perhaps most importantly, the spend distribution doesn't seem to have any relationship to differences in TSR. It seems to reflect the algorithm's familiarity with the control more than the ad's own merits.
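One simple way to put a number on "spend doesn't track TSR" is a rank correlation between each ad's spend and its TSR. Below is a minimal Spearman rank correlation sketch in plain Python. The ad labels and the spend and TSR figures are hypothetical placeholders, not the actual test data. With only seven ads, a single correlation like this is noisy, so it's a sanity check rather than a verdict.

```python
def average_ranks(values: list[float]) -> list[float]:
    """Rank values 1..n, giving tied values the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j across a run of tied values.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # positions are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / (sxx * syy) ** 0.5


def spearman(x: list[float], y: list[float]) -> float:
    """Spearman's rho: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical test: control plus 3 new-intro and 3 new-thumbnail variants.
ads = {
    "control":     {"spend": 4_200.0, "tsr": 0.24},
    "intro_1":     {"spend": 310.0,   "tsr": 0.29},
    "intro_2":     {"spend": 150.0,   "tsr": 0.21},
    "intro_3":     {"spend": 95.0,    "tsr": 0.27},
    "thumbnail_1": {"spend": 220.0,   "tsr": 0.26},
    "thumbnail_2": {"spend": 60.0,    "tsr": 0.31},
    "thumbnail_3": {"spend": 130.0,   "tsr": 0.23},
}
spend = [a["spend"] for a in ads.values()]
tsr = [a["tsr"] for a in ads.values()]
print(f"Spearman rho (spend vs. TSR): {spearman(spend, tsr):.2f}")
```

A rank-based measure is used here so that one ad hogging most of the spend doesn't dominate the result the way it would in a plain Pearson correlation.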

Take a look:

Because of the absurd spend allocation toward the control, we've gone ahead and shut off the control entirely to give the variants room to spend, so we can re-evaluate on more even ground. This is a pretty extreme measure by our standards; normally we would just call the test with the control as the winner. But as marketers and platform experts, we have reason to believe the control really shouldn't be the winner here, so we want to be vigilant in stress-testing this.
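Once the variants get more even spend, the question becomes whether their TSR differences are bigger than noise. No particular statistical test is named above; a standard choice for comparing two rates like TSR is a two-proportion z-test, sketched below in Python. The function name and the impression and view counts are hypothetical, and the test treats each impression as an independent trial, which is a simplification for ad delivery.

```python
import math


def tsr_z_test(views_a: int, impressions_a: int,
               views_b: int, impressions_b: int) -> tuple[float, float]:
    """Two-sided two-proportion z-test on TSR (3-second views / impressions).

    Returns (z, p_value).
    """
    p_a = views_a / impressions_a
    p_b = views_b / impressions_b
    # Pooled rate under the null hypothesis that both ads share one TSR.
    pooled = (views_a + views_b) / (impressions_a + impressions_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b))
    z = (p_a - p_b) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical: a variant at 30% TSR on only 200 impressions vs. a control at 25%.
z_small, p_small = tsr_z_test(60, 200, 12_500, 50_000)
# The same two rates once the variant has 5,000 impressions.
z_large, p_large = tsr_z_test(1_500, 5_000, 12_500, 50_000)

print(f"200 impressions:   z = {z_small:.2f}, p = {p_small:.3f}")
print(f"5,000 impressions: z = {z_large:.2f}, p = {p_large:.3g}")
```

The TSR gap is the same 5 points in both cases; only the variant's volume changes. That's the sense in which a variant starved of spend can't tell us much yet.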

There are all sorts of ways you can poke holes here. There are lots of variables at play, including spend, purchase volume, and more, so this data isn't conclusive proof of anything. But at the very least, it demonstrates that we should treat TSR with skepticism and remember that there's still nothing that can stand in as a reliable substitute for CAC or ROAS.
